For those diving into NAS sizing, I wanted to share this write-up to help explain the roles of Cache Repositories and Metadata. The sizing of these components varies depending on the type of backup storage you’re using. This consideration has become increasingly important with the addition of object storage support in v12.

A Cache Repository is a storage location where Veeam Backup & Replication keeps temporary cached metadata (folder-level hashes) for the NAS data being protected. A Cache Repository improves incremental backup performance because it lets Veeam quickly identify source folders that have no changes by matching their hashes against those stored in the Cache Repository.
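To make the idea of matching folder-level hashes more concrete, here is a minimal Python sketch. It is not Veeam's implementation: the function names `folder_hash` and `changed_folders`, the plain dictionary used as the cache, and the choice to hash file names, sizes, and modification times are all assumptions made for illustration only.

```python
import hashlib
import os


def folder_hash(path):
    """Hash the name, size and mtime of every file directly inside `path`.

    Hypothetical helper: the real hash inputs used by Veeam are not
    described in this post.
    """
    digest = hashlib.sha256()
    with os.scandir(path) as entries:
        for entry in sorted(entries, key=lambda e: e.name):
            if entry.is_file(follow_symlinks=False):
                info = entry.stat(follow_symlinks=False)
                record = f"{entry.name}|{info.st_size}|{info.st_mtime_ns}"
                digest.update(record.encode("utf-8"))
    return digest.hexdigest()


def changed_folders(root, cache):
    """Return folders whose hash differs from the cached one.

    `cache` maps folder path -> last known hash and is updated in place,
    standing in for the cached metadata on a Cache Repository.
    """
    changed = []
    for dirpath, _dirnames, _filenames in os.walk(root):
        current = folder_hash(dirpath)
        if cache.get(dirpath) != current:
            # Only these folders would need to be scanned file by file.
            changed.append(dirpath)
            cache[dirpath] = current
    return changed
```

On the first run with an empty cache every folder is reported as changed. On later runs, any folder whose hash still matches the cached value is skipped, which is the effect that speeds up incremental passes.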

When a NAS backup targets a disk-based repository, the cache metadata is held in memory, so very little storage, if any, is required on the Cache Repository: roughly 1 to 2 GB.

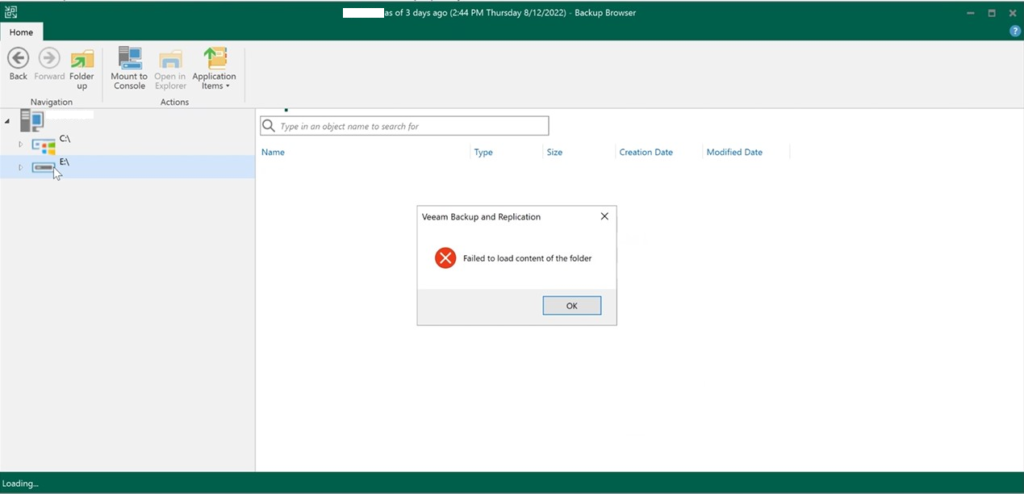

Not to be confused with the cached metadata (folder-level hashes) on the Cache Repository, Metadata is created by Veeam to describe the backup: the backup files, the source, file names and versions, and pointers to the backup blobs. Most often, when performing restore, merge, or transform operations, Veeam interacts with this Metadata rather than with the backup data itself.

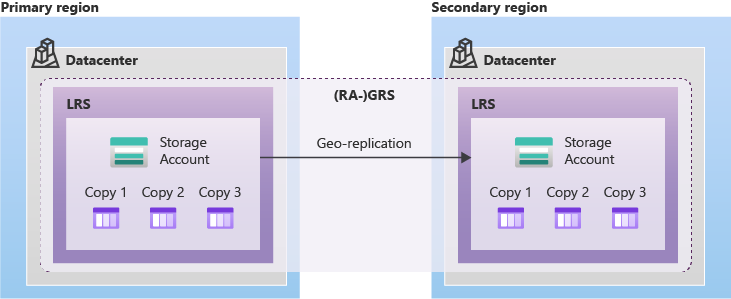

Metadata is always redundant (“meta” and “metabackup” or “metacopyv2”). The actual placement and number of metadata copies depend on the repository configuration and type. This is important to understand because the number and placement of Metadata copies affect how much storage is required.
