I was recently working on a VMware SRM solution utilising an IBM Storwize V3700 SAN at each site with remote mirror. I had configured a Global Mirror with Change Volumes relationship over IP, which worked beautifully while both source and target SANs were in the same subnet and building. Once the target SAN was moved out of the building to the DR site, the IP SAN traffic went through the gateway and across the WAN to the other site. The performance of the IP replication was pretty average, to say the least: on a 100Mbps link I could only achieve 1MBps, even though a Windows file copy would easily saturate the link at a consistent 10MBps.

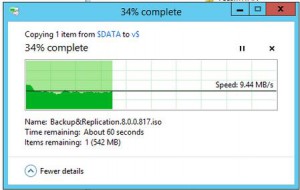
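To put those figures side by side, here is a minimal sketch of the arithmetic, assuming 8 bits per byte and ignoring protocol overhead. The function names are purely illustrative.

```python
def mbps_to_mbytes_per_s(megabits_per_second: float) -> float:
    """Convert a link speed in megabits/s to megabytes/s (8 bits per byte)."""
    return megabits_per_second / 8.0


def utilisation(throughput_mbytes_per_s: float, link_mbps: float) -> float:
    """Fraction of the raw link capacity used by a given throughput."""
    return throughput_mbytes_per_s / mbps_to_mbytes_per_s(link_mbps)


LINK_MBPS = 100  # WAN link speed quoted above
ceiling = mbps_to_mbytes_per_s(LINK_MBPS)  # raw ceiling, overhead ignored

for label, rate in (("remote mirror", 1.0), ("Windows file copy", 10.0)):
    share = utilisation(rate, LINK_MBPS)
    print(f"{label}: {rate} MB/s is {share:.0%} of the {ceiling} MB/s ceiling")
```

In other words, the file copy was using roughly 80% of the raw link capacity while the remote mirror was sitting at about 8%.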

We ended up testing the link for packet loss. While it did show some loss, I had assumed that because Windows file copy could achieve 10MBps, the Storwize SAN from IBM should be able to achieve similar results.
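For anyone wanting to put a number on link quality, one common approach is to run a long `ping` and read the loss percentage from its summary line. The sketch below only covers the parsing step and assumes the usual Linux and Windows summary formats. The function name and the sample strings are illustrative, not output captured from this link.

```python
import re
from typing import Optional


def parse_packet_loss(ping_output: str) -> Optional[float]:
    """Return the packet loss percentage from ping's summary, or None if absent.

    Matches both the Linux style ("10% packet loss") and the
    Windows style ("(0% loss)").
    """
    match = re.search(r"(\d+(?:\.\d+)?)%\s*(?:packet )?loss", ping_output)
    return float(match.group(1)) if match else None


# Illustrative summary lines, not real measurements
linux_summary = "1000 packets transmitted, 990 received, 1% packet loss, time 999123ms"
windows_summary = "    Packets: Sent = 4, Received = 4, Lost = 0 (0% loss),"

print(parse_packet_loss(linux_summary))
print(parse_packet_loss(windows_summary))
```

A loss figure that looks negligible for ordinary traffic is still worth chasing down when the link carries replication.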

Well, it turns out that’s incorrect, as per the snippet below from the IBM System Storage SAN Volume Controller and Storwize V7000 Replication Family Services Redbook:

> packet loss results in severe performance degradation well out of proportion to the number of packets actually lost. A link that is considered “high quality” for most TCP/IP applications might be completely unsuitable for the remote mirror.

Now, this helped explain why Veeam could perform Backup Copy to the DR site at a consistently fast 10MBps without any problems, yet the remote mirror performed so badly.

After resolving the packet loss problem, which turned out to be dodgy SFP+ fibre adapters and a couple of cables, the remote mirror performance jumped straight to 10MBps and has stayed there consistently ever since.