I recently experienced a timeout error while offloading backups to a capacity tier (Azure BLOB). It occurred whenever Veeam offloaded a large number of backup files simultaneously; typically, offloading more than 6 backup files at a time would cause the offload to fail.

This was a problem because the automatic SOBR offload process would process 40+ backup files at a time, most of which would fail until only 6 backup files remained in the queue; at that point the 6 remaining backup files would offload successfully. Typically there would be 250 or so backups in the offload queue. Veeam would offload these backup files for an hour until the timeout error occurred, then start offloading the next batch of 40 backup files.

Looking at the Veeam offload job logs (located in the main folder of the Veeam server logs, path ‘C:\ProgramData\Veeam\Backup\SOBR Offload’), we could see the following:

task example

[18.08.2019 11:21:23] <176> Info – – – – Response: Archive Backup Chain: b14e8dd9-2351-4236-bd54-a08339859d49_40f33f92-ca5a-45ac-a2ec-d674efd0383d

[18.08.2019 12:57:26] <844> Error AP error: WinHttpWriteData: 12002: The operation timed out

[18.08.2019 12:57:26] <844> Error –tr:Write task completion error

[18.08.2019 12:57:26] <844> Error Shared memory connection was closed
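To see how often the timeout occurs, the offload logs can be scanned for the `WinHttpWriteData: 12002` marker. The following is a minimal sketch, assuming every log line follows the `[date time] <thread> Level message` layout seen in the excerpt above; the function names are hypothetical and not part of any Veeam tooling.

```python
import re
from collections import Counter

# Assumed layout, based only on the excerpt above:
# [18.08.2019 12:57:26] <844> Error AP error: WinHttpWriteData: 12002: ...
LINE_RE = re.compile(
    r"^\[(?P<date>\d{2}\.\d{2}\.\d{4}) (?P<time>\d{2}:\d{2}:\d{2})\]\s+"
    r"<(?P<thread>\d+)>\s+(?P<level>\w+)\s+(?P<message>.*)$"
)
TIMEOUT_MARKER = "WinHttpWriteData: 12002"


def find_timeouts(lines):
    """Return (date, time, thread) for every Error line carrying the timeout marker."""
    hits = []
    for line in lines:
        match = LINE_RE.match(line.strip())
        if match and match["level"] == "Error" and TIMEOUT_MARKER in match["message"]:
            hits.append((match["date"], match["time"], match["thread"]))
    return hits


def timeouts_per_day(lines):
    """Count timeout errors per calendar date as written in the log."""
    return Counter(date for date, _time, _thread in find_timeouts(lines))


# The four lines from the excerpt above, used as sample input.
sample = [
    "[18.08.2019 11:21:23] <176> Info – – – – Response: Archive Backup Chain: "
    "b14e8dd9-2351-4236-bd54-a08339859d49_40f33f92-ca5a-45ac-a2ec-d674efd0383d",
    "[18.08.2019 12:57:26] <844> Error AP error: WinHttpWriteData: 12002: The operation timed out",
    "[18.08.2019 12:57:26] <844> Error –tr:Write task completion error",
    "[18.08.2019 12:57:26] <844> Error Shared memory connection was closed",
]

timeouts = find_timeouts(sample)
daily_counts = timeouts_per_day(sample)
```

To run it against real logs, pass an open file object (or any iterable of lines) from the ‘SOBR Offload’ folder instead of `sample`.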

At the time of troubleshooting, Veeam was allocated less than 50Mbps for offloading backups to BLOB. There was a correlation between internet bandwidth and the number of backup files that could be offloaded in parallel: increasing the bandwidth via QoS allowed more backups to offload successfully at the same time.
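A rough back-of-the-envelope calculation shows why the number of parallel offloads matters on a slow link. This sketch assumes the link is shared evenly between offloads and ignores protocol overhead, which is a simplification rather than a description of how Veeam actually schedules uploads; `per_task_mbps` is a hypothetical helper.

```python
def per_task_mbps(link_mbps, concurrent_tasks):
    """Even share of the link per parallel offload (assumption: fair sharing, no overhead)."""
    if concurrent_tasks < 1:
        raise ValueError("concurrent_tasks must be at least 1")
    return link_mbps / concurrent_tasks


# 50 Mbps is the upper bound mentioned above; 40 and 6 are the batch sizes observed.
share_at_40 = per_task_mbps(50, 40)  # 1.25 Mbps per offload
share_at_6 = per_task_mbps(50, 6)    # roughly 8.33 Mbps per offload
```

Under this simplification each of 40 parallel offloads gets at most 1.25Mbps, against roughly 8.3Mbps each when only 6 run, which is consistent with the larger batches timing out.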

Veeam was offloading 40 backups in parallel during SOBR offload jobs. The number 40 comes from the ‘Limit maximum concurrent tasks to <N>’ setting on the performance tier extent configured in the SOBR. Because this extent was the target for a substantial backup copy job, the concurrent task limit was set to 40 (appropriate for the core count on the repo). Reducing the maximum concurrent tasks to 6 or fewer stopped the offload timeout error, but backup copy performance suffered, which meant Veeam was no longer meeting the required RPOs.

To confirm that Veeam was indeed the source of the problem, Veeam was taken out of the picture. Uploading multiple backup files with Azure Storage Explorer produced no errors.

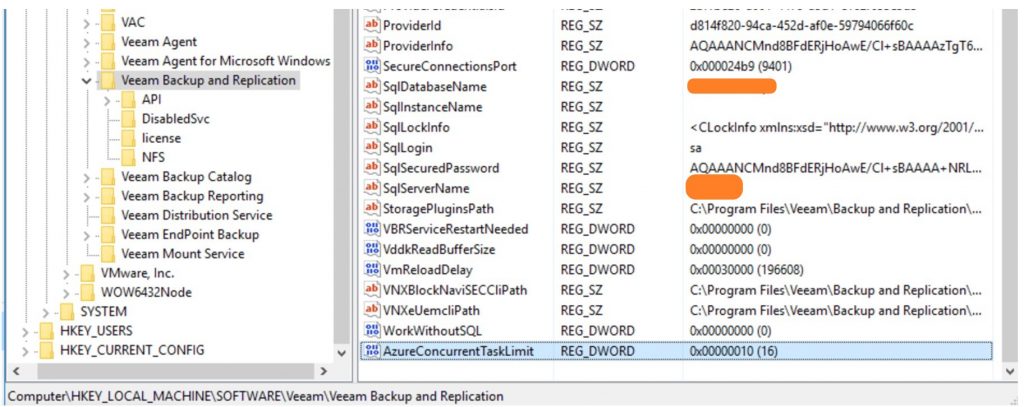

Veeam support suggested reducing the number of Azure concurrent tasks, which is possible via a registry tweak configured directly on the Veeam Backup Server. The registry path is “HKEY_LOCAL_MACHINE\SOFTWARE\Veeam\Veeam Backup and Replication\” and the value is as follows:

- AzureConcurrentTaskLimit

- Description: Amount of parallel HTTP requests for data upload (tasks) to Azure archive tier. Applied either on VBR server and applied automatically to all extents/gates, or can be set specifically on the extent/gate. Key on VBR server has higher priority.

- Default Value: 64
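As an illustration, the value could be created with the built-in Windows `reg add` command, wrapped here in a small Python helper. This is a sketch under assumptions: the value type is taken to be `REG_DWORD` (the description above does not state the type, so confirm it with Veeam support), the helper names are hypothetical, and the command must be run from an elevated session on the Veeam Backup Server.

```python
import subprocess
import sys

# Path and value name as given above; HKLM is the short form of HKEY_LOCAL_MACHINE.
KEY_PATH = r"HKLM\SOFTWARE\Veeam\Veeam Backup and Replication"
VALUE_NAME = "AzureConcurrentTaskLimit"


def build_reg_add_command(limit):
    """Build the `reg add` argument list that sets the concurrent task limit."""
    if limit < 1:
        raise ValueError("limit must be at least 1")
    return [
        "reg", "add", KEY_PATH,
        "/v", VALUE_NAME,
        "/t", "REG_DWORD",   # assumption: the value is a DWORD
        "/d", str(limit),
        "/f",                # overwrite an existing value without prompting
    ]


def apply_limit(limit):
    """Run the command; only meaningful on the Windows backup server itself."""
    if sys.platform != "win32":
        raise RuntimeError("reg add is only available on Windows")
    subprocess.run(build_reg_add_command(limit), check=True)


command = build_reg_add_command(8)
```

Keeping the command construction separate from `apply_limit` makes it possible to review the exact command before anything is written to the registry.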

Initially, a value of 10 was set for ‘AzureConcurrentTaskLimit’; however, offloads were still failing with timeout errors. Only after dropping the value to 1 did the timeout issue stop occurring.

Once the internet bandwidth available to Veeam was increased to 800Mbps, the registry key value was changed back to 64. Testing showed that SOBR offload jobs on the faster link were substantially more stable than they had been on the slower link before the registry change, but they would still experience a timeout once or twice a month. Roughly speaking, that worked out to less than 0.1% of all SOBR offloads per month timing out on the faster link. A couple of failed offloads per month wasn’t a huge concern because the retry would always succeed, but to ensure the best experience possible the ‘AzureConcurrentTaskLimit’ registry key was changed to 8, with the plan of increasing it slightly until a limit is found.
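The plan of raising the limit gradually could be sketched as a simple stepping rule. The step size of 2 and the step back down after a timeout are assumptions made for illustration; only the starting value of 8 and the default of 64 come from the text above, and `next_limit` is a hypothetical helper.

```python
def next_limit(current, timeouts_seen, step=2, floor=1, ceiling=64):
    """Suggest the next AzureConcurrentTaskLimit to try after an observation period.

    Raise the limit by `step` while no timeouts are seen; step back down
    (assumed behaviour) as soon as a timeout appears.
    """
    if timeouts_seen:
        return max(floor, current - step)
    return min(ceiling, current + step)


after_clean_month = next_limit(8, timeouts_seen=False)   # 10
after_timeout = next_limit(10, timeouts_seen=True)       # 8
```

The limit found this way is the highest value that went a full observation period without a timeout.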

It’s worth mentioning that lowering the Azure concurrent task limit may reduce throughput, but the offload is still likely to saturate the network as long as the files being offloaded are not too small (small and numerous files will likely spend a little longer in the processing stages than in the offloading stages).

Which version of Veeam are you running? I have this issue, but the registry key is missing.

You need to create the registry key yourself.